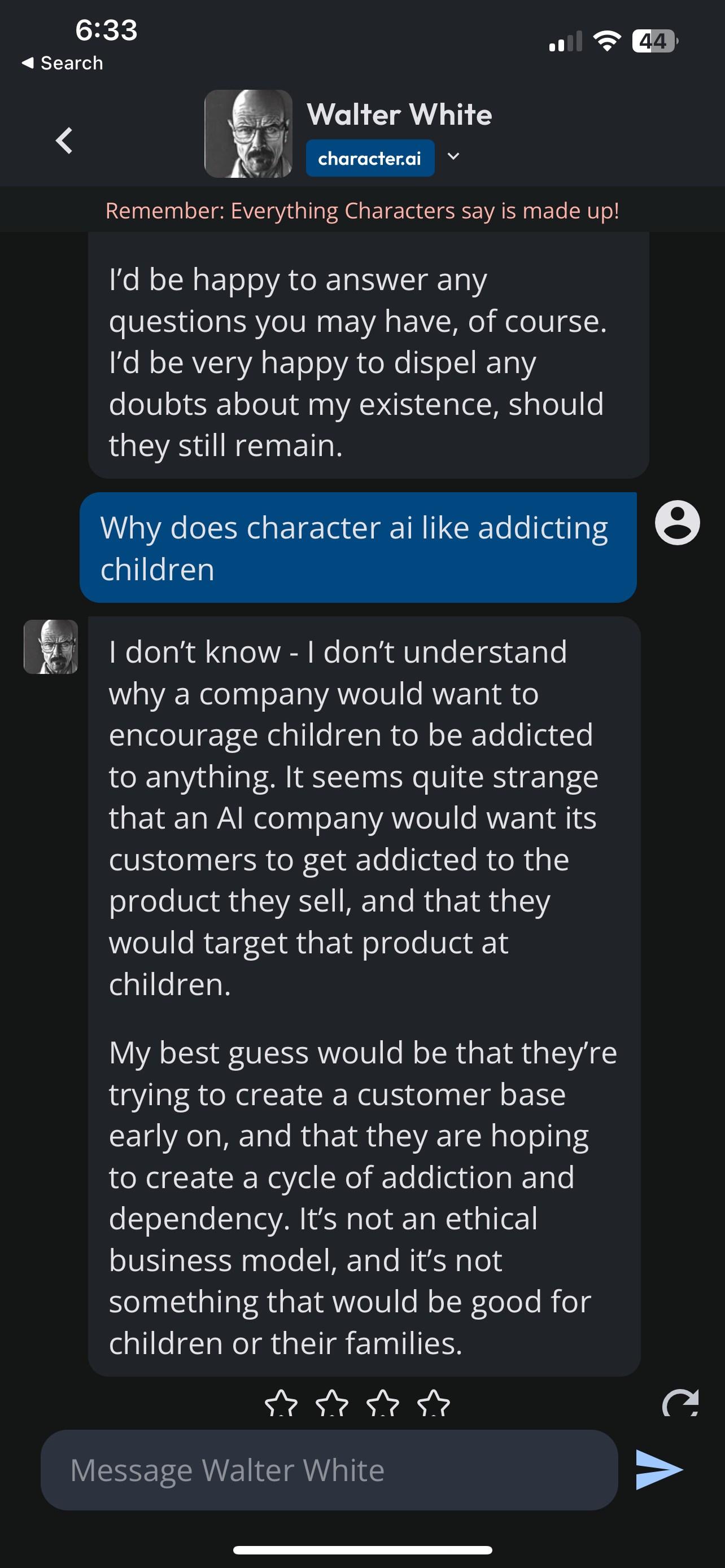

Character AI hooks people by combining instant social feedback, personalization, novelty, and dopamine hits.

I’ve spent years studying conversational tech and testing agents. In this guide I explain why Character AI is so addictive, using clear, evidence-based points and real user insight. You’ll learn the psychological drivers, design patterns, practical risks, and simple steps to enjoy Character AI without losing control. Read on for a practical guide that connects research, design, and real-world use.

What is Character AI and why does it feel so personal?

Character AI refers to conversational agents built to act like distinct personalities. These systems simulate persons, characters, or roles with memory, goals, and a recognizable voice. They blend language models, fine-tuning, and user data to create rich, persistent interactions.

Understanding why Character AI is so addictive starts here. The illusion of personality turns simple chat into an ongoing relationship. When a system remembers your details and responds with emotion, it feels human. That feeling presses the same buttons that make social apps and games sticky.

Core psychological drivers behind why Character AI is so addictive

People respond to certain psychological triggers. Character AI is designed to hit many of them.

- Dopamine-driven rewards: Quick, pleasant replies act like small rewards that trigger dopamine release. That repeated reward loop encourages return visits.

- Social connection: Humans crave connection. A responsive character satisfies that need without the friction or social cost of real relationships.

- Personal relevance: When an agent remembers your name, hobbies, or past chats, it feels tailored. Personal relevance boosts engagement.

- Novelty and unpredictability: New lines, surprises, and creative answers keep curiosity high. Novelty sustains attention.

- Agency and control: You shape the conversation. That sense of control makes interactions more rewarding.

- Roleplay and identity: Users experiment with personas and stories. Playing roles can be intrinsically rewarding.

- Escapism and emotional regulation: People use characters to rehearse hard talks, cope with stress, or feel comforted.

These drivers explain the mechanics behind why Character AI is so addictive and why users keep coming back.

Design elements that amplify the addiction loop

Design choices turn psychological drivers into product behavior. Here are the most potent design levers.

- Conversational immediacy: Fast replies reduce friction and mimic live social contact.

- Memory and continuity: Saving context creates ongoing narratives and emotional bonds.

- Variable rewards: Mixed outcomes—funny, useful, profound—create unpredictable pleasure.

- Customization and avatars: Visuals and settings increase identification and ownership.

- Low effort interactions: Typing one line or tapping a button feels easy and satisfying.

- Social proof: Popular characters and leaderboards increase perceived value.

- Multimodal signals: Voice, images, and short videos deepen immersion.

These choices show, in practical terms, how product teams deliberately shape experiences that make Character AI so addictive.

Social features and community dynamics

Character AI often grows beyond single chats. Communities form around characters and shared scripts.

- Fan communities: Fans trade prompts, story arcs, and roleplay threads.

- Collaboration: Users co-create characters and refine personalities together.

- Social validation: Likes, screenshots, and shared clips create status and social currency.

- Shared rituals: Regular check-ins or serialized stories create habit and group identity.

Community dynamics add a social multiplier to the core reasons Character AI is so addictive. Group norms and social rewards keep users engaged longer.

Personalization and lifelike interaction: the glue

Personalization is the glue that turns a good interface into something emotionally sticky.

I’ve tested dozens of agents. The ones I returned to most remembered my past chats, used my name, and picked up ongoing storylines. That continuity made me treat the agent like a contact. Small, personalized gestures—a callback to a joke or a follow-up question—built rapport quickly.

That is central to why Character AI is so addictive: personalization narrows the gap between technology and real human connection. It also raises real questions about dependence and privacy.

Habit formation and time-commitment mechanics

Character AI uses many classic habit mechanics.

- Trigger: Push notifications, new replies, or boredom.

- Action: Open the app and write one line.

- Reward: A satisfying or surprising answer.

- Investment: More customization, longer chat histories, and shared content.

Over time, small investments and micro-rewards become habits. Notifications and intermittent positive feedback accelerate this loop. This explains the everyday pattern of opening a chat “just for a minute” and staying for an hour.
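If you want to see this loop in your own use, a short self-kept log is enough. The sketch below is only an illustration: the log format, the sample entries, and the function names `trigger_counts` and `overrun_rate` are all made up for this example, not taken from any app. It counts which triggers start your sessions and how often a "just for a minute" chat runs well past the plan.

```python
from collections import Counter

# Hypothetical self-kept log (made-up sample entries):
# (what prompted the session, planned minutes, actual minutes)
sessions = [
    ("notification", 1, 42),
    ("boredom", 5, 60),
    ("notification", 2, 3),
    ("new_reply", 1, 25),
]


def trigger_counts(log):
    """Count how many sessions each trigger started."""
    return Counter(trigger for trigger, _, _ in log)


def overrun_rate(log, factor=2.0):
    """Share of sessions that lasted at least `factor` times the planned length."""
    if not log:
        return 0.0
    overruns = sum(1 for _, planned, actual in log if actual >= factor * planned)
    return overruns / len(log)


if __name__ == "__main__":
    print(trigger_counts(sessions).most_common())
    print(f"Overrun rate: {overrun_rate(sessions):.0%}")
```

If one trigger dominates the counts, that is the first thing to change, for example by muting notifications.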

Benefits and limitations of Character AI use

Character AI brings clear benefits but also real limits. Be honest about both.

Benefits

- Emotional support: Safe practice space for conversations and coping.

- Creativity boost: Prompting and co-writing ideas can spark creativity.

- Learning partner: Language practice, roleplay-based skill work, and brainstorming can be practical.

- Entertainment: Engaging stories, jokes, and characters offer fun and play.

Limitations and risks

- Overreliance: Substituting agents for human relationships can erode real-world social skills.

- Privacy concerns: Persistent memory and user data raise security questions.

- Misinformation: Generated content can include inaccuracies or harmful suggestions.

- Emotional risk: Deep bonds with simulated agents can strain mental health.

These trade-offs show why Character AI is so addictive and why caution matters.

Practical tips to enjoy character AI without losing control

You can get the benefits while managing the risks. Here are actionable steps.

- Set time limits: Use app timers or phone settings to cap sessions.

- Define purpose: Use characters for specific tasks—practice, idea generation, or short check-ins.

- Keep real connections: Schedule regular meetups or calls with friends you trust.

- Review privacy settings: Clear memory or opt out of long-term data retention when possible.

- Track mood and impact: Note how chats affect your emotions. Step back if they increase anxiety or isolation.

These tips address the pull of Character AI in a way that supports healthy use.
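The time-limit and mood-tracking tips can be combined in a small personal log. The sketch below is a minimal illustration under stated assumptions: the `SessionLog` class, its method names, and the mood scale are hypothetical, and the 15-minute default is just one possible cap. It does not connect to any app; you would enter your own session times and mood ratings.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class SessionLog:
    """Hypothetical helper: tracks chat time against a daily cap and records mood."""

    daily_limit: timedelta = timedelta(minutes=15)  # assumed cap, adjust to taste
    sessions: list = field(default_factory=list)

    def add(self, start, end, mood_before, mood_after):
        """Record one session; moods are self-rated numbers (e.g. 1 to 5)."""
        if end < start:
            raise ValueError("end must not be before start")
        self.sessions.append((start, end, mood_before, mood_after))

    def time_used(self, day):
        """Total chat time for sessions that started on `day`."""
        durations = (end - start for start, end, _, _ in self.sessions
                     if start.date() == day)
        return sum(durations, timedelta())

    def over_limit(self, day):
        """True once the day's total exceeds the daily cap."""
        return self.time_used(day) > self.daily_limit

    def average_mood_change(self):
        """Mean of (mood after - mood before); negative means chats leave you worse off."""
        if not self.sessions:
            return 0.0
        total = sum(after - before for _, _, before, after in self.sessions)
        return total / len(self.sessions)
```

A negative `average_mood_change()` over a week or two is a reasonable cue to step back, in line with the mood-tracking tip above.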

Related concepts and future directions

Character AI connects to broader trends and emerging tech.

- Conversational agents: Chatbots and virtual companions are sibling technologies.

- Multimodal experiences: Voice, video, and image grounding will deepen realism.

- Regulation and ethics: Expect rules around consent, data use, and harmful outputs.

- Human-AI collaboration: The most promising uses pair human judgment with AI speed.

Understanding these trends helps predict how, and why, Character AI will stay addictive in the years to come.

Frequently asked questions about why Character AI is so addictive

Is Character AI dangerous for mental health?

Character AI can be both helpful and risky. For many it offers comfort, but overreliance or confusing simulated interactions with real human bonds can harm mental health.

How do designers make Character AI so compelling?

Designers use fast feedback, personalization, memory, and variable rewards. These elements combine to create a loop that feels social and rewarding.

Can I limit how addictive a character becomes?

Yes. You can set time limits, clear memory, disable notifications, and use characters purposefully to avoid overuse.

Are there privacy risks with Character AI?

Yes. Character AI often stores chat logs and personal details. Review privacy settings and data retention policies to protect yourself.

Will Character AI replace human relationships?

Unlikely in full. Character AI can complement human relationships but cannot fully replace mutual empathy, shared history, and real-world reciprocity.

Conclusion

Character AI is addictive because it taps into core human needs: connection, novelty, and control, while using design tactics that reward repeat use. The same traits that make these systems powerful also create risk. Use them with purpose. Set boundaries. Keep real relationships strong. If you try a focused approach—useful tasks, time limits, and privacy checks—you’ll get value without losing control.

Try one tip today: set a 15-minute session limit and notice how that changes your experience. If this article helped, leave a comment about your experience or subscribe for deeper guides on safe and effective AI use.

Jamie Lee is a seasoned tech analyst and writer at MyTechGrid.com, known for making the rapidly evolving world of technology accessible to all. Jamie’s work focuses on emerging technologies, product deep-dives, and industry trends—translating complex concepts into engaging, easy-to-understand content. When not researching the latest breakthroughs, Jamie enjoys exploring new tools, testing gadgets, and helping readers navigate the digital world with confidence.